How My School's Use of AI Indexed Pornography on Their Official .edu Site

by Addie Noyes

This is the story of how embracing AI led to my university accidentally indexing explicit content on their website.

Every year, we give tee shirts out to all of the students in our incoming class. The design of these shirts was originally chosen through a contest: students could submit their designs and maybe win a prize and bragging rights if theirs was chosen. Predictably, this incentive did not appeal to talented artists on campus who worked for commission, not exposure. The quality of the designs was not up to the Student Government Association's (SGA's) standards, so they decided to take a new route.

It started with an elephant design.

I first made this design way back in 2021, when I was a senior in high school. I already had friends on campus, and a robotics team asked me to create a usable logo for competitions, as they had a deadline coming up and were dealing with exams. I made a quick design using an open-source image from Pixabay: a geometric elephant, riffing on my school's elephant mascot. I added a circuit-inspired design to it in MS Paint and called it a day.

Before I sent this image off, I checked the content license on the base image I used. A content license is like copyright: it spells out where and how you can use and adapt a piece of media. Most images on the internet are under some kind of content license (either from an organization like Creative Commons or from the website you publish media on). The license for this image is linked at the bottom of this post, but the gist is this: if no artistic merit is added to an image from the site, it cannot be used commercially. You cannot sell anything with this image on it.

I didn't think much of this at the time, knowing my design was non-commercial and fair game as a logo for a school club. I sent the image to my friends on the robotics team, and honestly forgot about the whole thing.

Fast forward to my sophomore year of college. The new freshmen, the Class of '27, were establishing themselves on campus. Their class tee shirts had that elephant on the back of them. Students did not pay for their shirts, so it was still non-commercial. I cracked a few jokes about the school stealing my ideas, then returned to the much more immediate concern of being an engineering student barely staying on top of work.

Then it popped up again in my junior year. The Class of '28 also got this elephant on their tee shirts, but this time every student's shirt was different. Each student got to AI-generate an image to fill the shape of the elephant on their shirt before it was printed and mailed to them. There was some discontent over this at the time, and students were angry that they couldn't opt out: they had to generate an image if they wanted the shirt. A few tried the prompt 'white' to get a plain white shape with no image, but were blocked by a filter, since 'racial terms' could not be entered.

I thought that would be the end of that. But then they used that design a third time. This time, they weren't giving students a shirt. It was entirely commercial.

Later that year, my school announced its collaboration with the startup Drophouse AI to create those shirts. They also proudly announced the launch of a merchandise shop using Drophouse AI... where you could purchase a $59 hoodie with AI art and that elephant design.

A few students defended the company because it was founded by alumni. Some pointed out that the founders had worked very hard to set up their website and work out all the bugs, which I didn't doubt at the time. Creating a startup and breaking into a new market is difficult. However, using a non-commercial image in their design was not just in poor taste; it could land them in legal hot water.

I sent them a message on Instagram (after figuring out that their 'support email' did not exist). I advised them to take down the design and sent them the content license for the image. I wasn't sure whether my student government association had sent them that image or whether they had chosen it themselves, and I made it clear that it didn't really matter. I have yet to get a response to that message.

I also sent an email to our student government board, explaining the issues with using this image and encouraging them to return to designs created by students. I included the portfolio of an artist on campus who makes incredible work, just so they could see what they were missing. I received an apologetic reply, and I thought that was the end of it. This is where the pornography comes in.

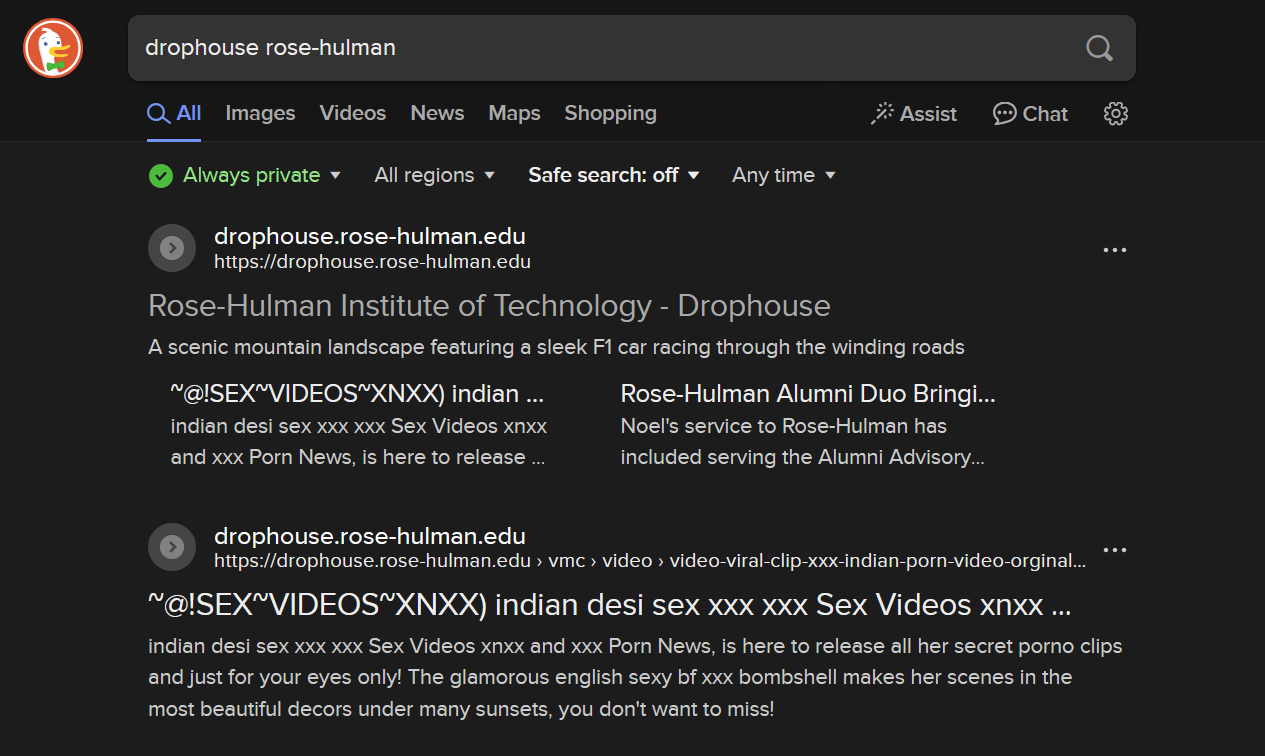

A couple weeks later, I checked in on the Drophouse website to see if they had removed the elephant design. I recently switched to Duck Duck Go, a search engine that does not filter explicit content as thoroughly as Google. I was greeted with this:

That is my school's .edu address. This company somehow managed to get pornography indexed on its founders' alma mater's website. When I tried to open any of the links shown, my browser could not connect to the website's server. When I later brought this to my school's IT department, they already knew about it and had tried to scrub it; the search results just hadn't updated to reflect the changes. They also let me know that Drophouse's contract had been terminated.

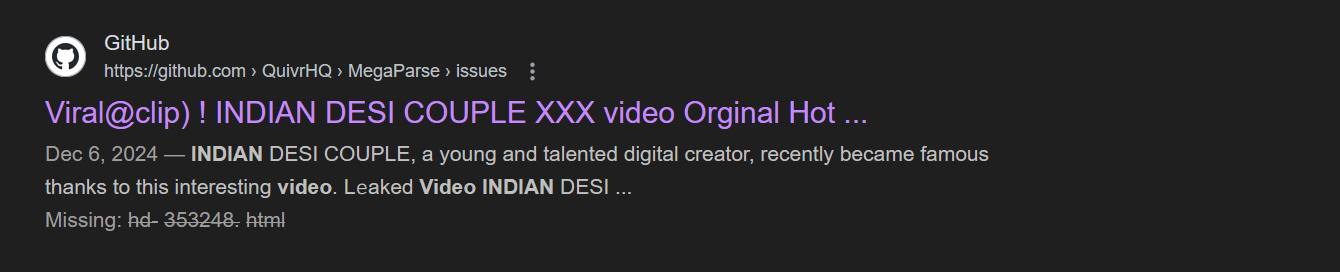

How did this get here? When I searched the pornography's title, I found the original website it was published on...and a GitHub file.

For those unfamiliar with coding terms, GitHub is a website where programmers can store and share code. This file had been created by a team completely unrelated to Drophouse AI; Drophouse had simply imported the code into its website. What was the file's purpose? It handled API calls (requests sent through an application programming interface) to OpenAI, the massive company that has repeatedly been accused of stealing personal data for its training models.

So not only did this startup commercially use a design it did not have the right to use; it didn't even code the primary feature of its website. The team pasted that code into their own and didn't check it thoroughly enough to catch that a developer had included a porn title in those files, likely by accident or as a practical joke.

So, what's the lesson from this story? You can debate the value of language and image generation models. But it seems the people who use this kind of tech will likely be careless in all aspects of their work. They could easily have caught these issues and still minimized their company's startup time.

Was what they did with the elephant design legal? That's a grey area. According to the content license, they have the right to use it commercially if artistic merit is added in the final product. What is artistic merit? Well, in this case, anything you can prove in court. It's not a cut-and-dried definition. Almost every time it has been discussed in the U.S. legal system, the motivation was to determine whether explicit and pornographic content can have an artistic purpose (a bit ironic in this case). Does adding an AI art overlay to an image count?

I think these founders would probably argue that it does, and I don't think that is an unjustifiable position. However, this leads to an important discussion about the use of AI to create images.

I think advocates for AI trained on data from the internet see other people's work as just... data. If they think AI content has artistic merit, then they must think that the data it is trained on has merit as well. If someone sees value in another's work and takes it without giving credit, that is theft. Even if these shirts have some artistic value, this company still profits from stolen work.

Maybe it's not surprising. Users of AI art can talk about the technological marvel of it, and I'd agree with them. There are many incredible and useful things you could create with these algorithms and supercomputers.

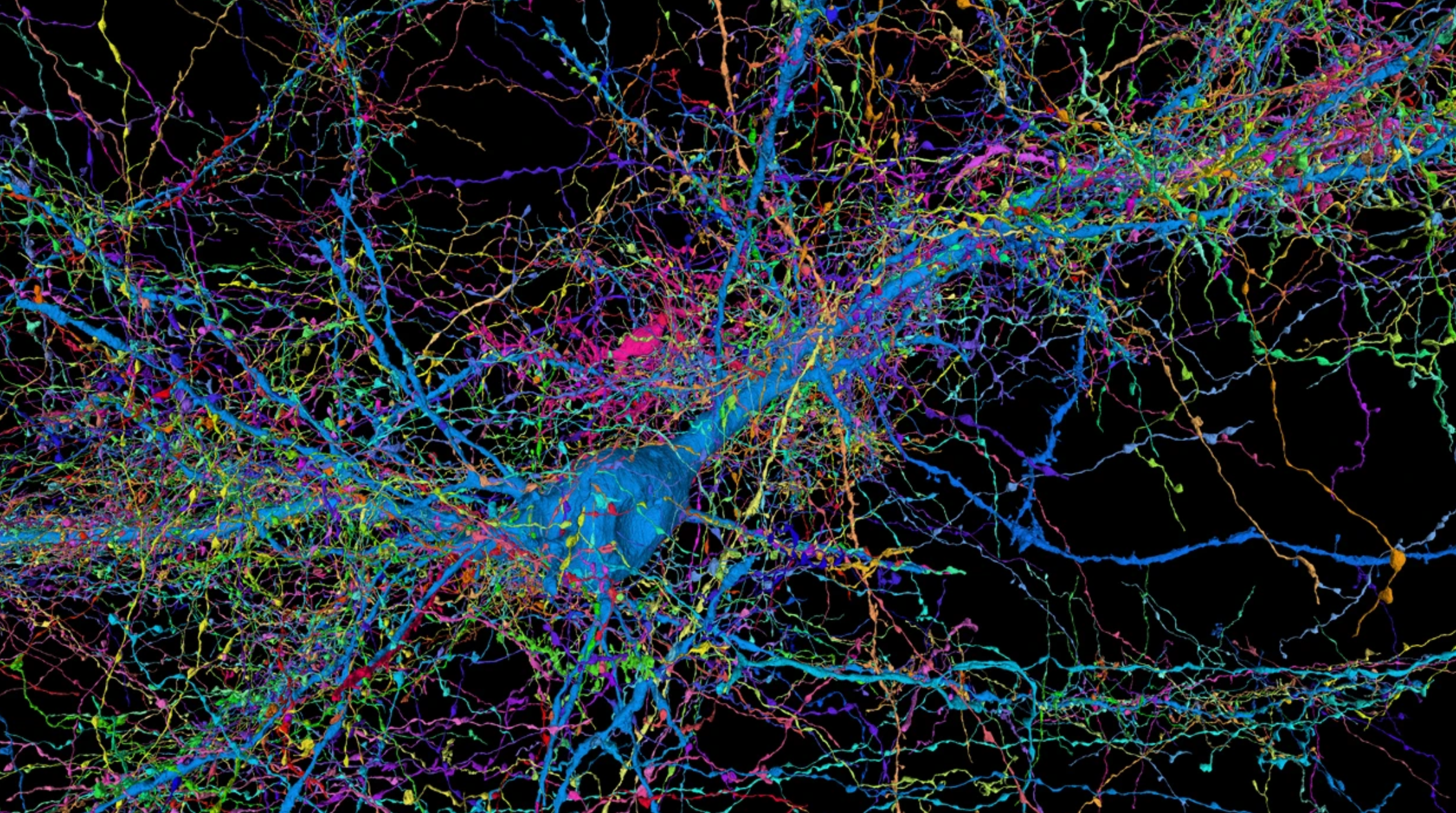

However, with the "AI boom" supposedly starting, do we put those resources towards precise mapping of the brain, like the image above? For automating certain data-based tasks? For beautiful generated designs that can visually represent abstract mathematical concepts?

Or do we allow these resources to go towards AI slop, bots, and targeted advertising filling our lives to the point we can't trust any image or text to be real?

What does Drophouse AI actually do? They didn't code the AI gimmick that they claim makes their product unique. After some digging, a friend found proof that they didn't code most of the rest of their website either: they hired a CS senior design team at my university to do it.

I'm certain they don't print their products in-house. They didn't create any of the designs for their shirts. They have a very simple business model: their only tasks are setting up a webpage for each 'drop' and ordering shirts. Yet somehow they managed to fumble it.

This entire fiasco proved to me what I already suspected. There are many incredible things you can do with AI, but when these kinds of people look at art and technology, they don't look critically or creatively. They look carelessly.

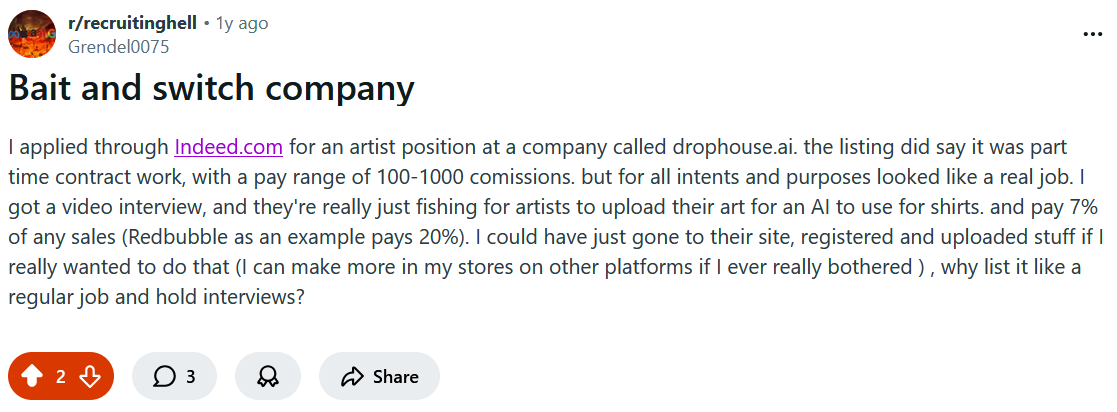

After posting this write-up, I came across a Reddit post on r/recruitinghell by an artist, about Drophouse AI, titled "bait and switch company." Drophouse had posted a job listing on Indeed seeking a graphic designer, promising a $100-1,000 pay range for commissions. However, when the poster got an interview, the company admitted it didn't intend to hire anyone. Instead, Drophouse encouraged them to submit their art directly to its website. This art would then be combined with AI art, and the artist would receive a commission from sales of that design.

The "Artist Section" of Drophouse's "Careers" page outlines this process. The problem with it? Drophouse offers a commission well below market value: only 7%. Though this way of obtaining art is legal, it still shows an unmistakable disrespect for the work that designers and artists do.

On the same page, they state that any work submitted to Drophouse must be original. It's probably unsurprising that the example design next to that disclaimer is the same elephant design that they stole.

Resources:

[2] QuivrHQ GitHub: Opiniated RAG for integrating GenAI in your apps

[3] 2023 Class Action Complaint against OpenAI

[4] A Review of Art Law in the Supreme Court, by Kathryn MacMillan

[5] How AI could lead to a better understanding of the brain, by Viren Jain

[6] A post on r/recruitinghell from an artist about Drophouse AI

[7] Rose Show booklet including the Drophouse website as a project